- Article Type: How To
- Product: Symphony
- Product Version: 6.13
- Component: Analytics Engine
- Device Brands:
- Created: 30-Jul-2014 12:42:46 PM
- Last Updated:
VE180 Indoor People Tracking Configuration Options

Overview

A number of additional advanced settings are configurable for the Symphony VE180 analytic specific to Indoor People Tracking (Server Configuration > Devices > Analytics Configuration > Overview > Task: Indoor People Tracking). The following sections describe these settings in detail.

IMPORTANT: These settings are for experts only, and the values should be changed only in cases where the analytic engine is not functioning as expected.

Environment subtab

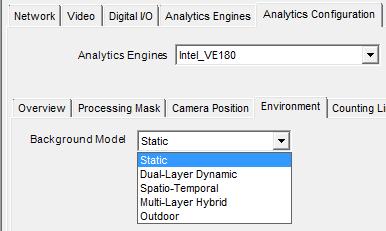

Background Model

When you specify the Indoor People Tracking task for the VE180, you can then select the Background Model that matches the environment in which your camera records images (Server Configuration > Devices > Analytics Configuration > Environment subtab).

| Background model | When to use |
| --- | --- |
| Static | Use where the background is relatively static (no periodic movement in the background such as swaying trees). This is the fastest background model. |
| Dual Layer Dynamic | Capable of tracking objects that are stationary for short periods of time. For example, use in areas where you want to track people standing still for 30 to 60 seconds. |
| Spatio-Temporal | Tracks only consistent motion, thus it can greatly reduce false alarms due to tree branches and other objects in the background that move randomly because of wind. |
| Multi-Layer Hybrid | Excellent all-purpose Indoor People Tracking background model. Uses color, brightness, and texture information. This background model can also use multi-scale processing to fuse information from different scales. You can customize to different scenarios by adjusting the sensitivity to intensity, color, or texture information in a given scene. |
| Outdoor | Designed for dynamic, weather-affected outdoor environments with considerable lighting inconsistencies due to sun or cloud shifts or object movement due to wind. People standing still are not tracked. |

Once you select a Background Model, you can then configure additional environmental settings. The settings common to all background models are described in the Senstar Symphony Online Help. This knowledge base article describes the model-specific settings in detail.

Additional Environmental Subtab Options Per Background Model

The following additional environmental options are available per background model.

Static

| Option | Description |
| --- | --- |
| Shadow/Illumination Removal | |
| Shadow Sensitivity | Controls how aggressively shadows (decreases in lighting) are ignored when tracking moving objects. Disabled by default. When enabled, move the slider to the right to allow the engine to correctly ignore more of the shadow areas. Note: Ignoring more shadow areas increases the chance that a person wearing dark clothing on a light background is incorrectly classified as a shadow. |
| Illumination Sensitivity | Controls how aggressively increases in lighting are ignored. Disabled by default. Increases in lighting occur when a light source intensifies, such as a car headlight beam shining on an area, or an overcast day becoming a sunny day. When enabled, move the slider to the right to allow the engine to correctly ignore more of these increases in illumination. Note: Ignoring lighting increases also increases the chance that a person wearing light clothing on a dark background is incorrectly classified as an illumination increase. |
| Track Slow Moving Objects | |
| Track Slow Moving Objects | Tracks an object moving very slowly across the video, or an object coming directly toward or moving directly away from the camera (which will appear to be moving slowly). Disabled by default. When disabled, slow moving objects tend to become part of the background and will not be tracked. When enabled, slow moving objects will be tracked. Important: This option might increase false alarms in situations where an object stands still in the video for a long time. |
| Object Speed | Speed of the tracked object. |
| Remove ghost Pixels | Any pixels that do not change in value for some time are not considered as foreground. Enabled by default. |
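To illustrate the trade-off described in the Shadow Sensitivity and Illumination Sensitivity notes, the following Python sketch classifies a single gray-level pixel against its background value. It is not the VE180 implementation: the function name `classify_pixel`, the 0 to 1 sensitivity scale, the `noise` tolerance, and the ratio test are all assumptions made for illustration.

```python
def classify_pixel(background, current, shadow_sensitivity=0.0,
                   illumination_sensitivity=0.0, noise=10):
    """Classify one gray-level pixel (0-255) against its background value.

    Hypothetical model: a sensitivity of 0 disables the feature; larger
    values tolerate a larger darkening (shadow) or brightening (illumination).
    """
    diff = current - background
    if abs(diff) <= noise:
        return "background"
    # Relative change with respect to the background brightness.
    ratio = abs(diff) / max(background, 1)
    if diff < 0 and ratio <= shadow_sensitivity:
        return "shadow"        # darker, but within the tolerated range
    if diff > 0 and ratio <= illumination_sensitivity:
        return "illumination"  # brighter, but within the tolerated range
    return "foreground"
```

In this toy model, a dark coat (120) in front of a light wall (200) is reported as foreground while shadow removal is off, but is absorbed as a shadow once `shadow_sensitivity` reaches 0.4 or more, which mirrors the misclassification risk noted above.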

Dual-Layer Dynamic

| Option | Description |
| --- | --- |
| Sensitivity | |
| Appearance | Manual, Bright shiny, Grey matted |
| Lower bound | Specifies a lower threshold of how different the object has to be before a different color is detected. Available when Manual is selected. |
| Upper bound | Specifies an upper threshold of how different the object has to be before a different color is detected. Available when Manual is selected. |
| Timing | The Dual-Layer Dynamic and Multi-Layer Hybrid background models interpret a scene using two representations: short-term and long-term. The short-term representation holds objects that have recently moved or just arrived in the scene. These are the objects that will be tracked. The long-term representation holds background objects that are not going to be tracked. Occasionally, some objects will move from the short-term representation to the long-term representation. At some point, short-term and long-term representations must also be deleted because the scene has changed over time. |
| Time to clear long-term representation (seconds) | The time to delete a long-term representation that is not currently visible. Example scenario using a car parking lot: A car left the parking spot. How long do you want the analytic to remember that it was there? If the same car comes back and parks in the same spot, and the old background is still remembered, then the car becomes background instantly. |
| Time to clear short-term representation (seconds) | The time in which stationary objects are reported as tracked objects, or the expected maximum dwell time for stationary objects. Considerations: Sometimes the background changes between several states, for example, a neon sign switching on and off. If the time to clear takes the period of the oscillation (in seconds) into account, the analytic can potentially learn both lighting states as background. On the other hand, if you have a scene where people in dark clothes are always passing by in the same spot and the time to clear is set to too many seconds, the dark clothes at this location might be seen as an intermittent feature of the background. |
| Time to move short-term to long-term representation (seconds) | The time to delete the representation of objects that pass through the scene. Many objects will move through the scene over time. The analytic learns these objects as part of the short-term representation. However, once they move (away from a spot or away from the scene), these past representations can be cleared from memory. |
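The parking lot scenario for Time to clear long-term representation can be sketched as a small expiry store. This is only an illustration of the described behavior, not the analytic's internal data structure; the class `LongTermMemory`, its methods, and the string keys used for locations and appearances are hypothetical.

```python
class LongTermMemory:
    """Toy store of long-term (background) appearances, keyed by location."""

    def __init__(self, time_to_clear_s):
        self.time_to_clear_s = time_to_clear_s
        self._last_seen = {}  # (location, appearance) -> time last visible

    def observe(self, location, appearance, now_s):
        """Record that a learned background appearance is visible now."""
        self._last_seen[(location, appearance)] = now_s

    def is_background(self, location, appearance, now_s):
        """True if this appearance is still remembered at this location."""
        key = (location, appearance)
        last = self._last_seen.get(key)
        if last is None:
            return False
        if now_s - last > self.time_to_clear_s:
            # Not visible for longer than the clear time: forget it.
            del self._last_seen[key]
            return False
        return True
```

With a clear time of 300 seconds, a car that returns to the same spot after 120 seconds is still remembered and becomes background immediately. With a clear time of 60 seconds, the same car would have been forgotten and would be treated as a new object.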

Spatio-Temporal

| Option | Description |
| --- | --- |
| Model Specific | |
| Mode | The background model tracks consistent motion, automatically detects abnormal behavior, or tracks motion only in a specified direction as follows:<br>Coherent motion – Tracks consistent motion; therefore, greatly reduces false alarms due to inconsistent motion such as tree branches and other objects in the background that can move randomly due to the wind. Set by default.<br>Abnormal behavior – Background model learns the normal patterns and directions of motion at each pixel; therefore, any abnormal direction of motion will be detected.<br>Wrong direction – Tracks motion only in a specified direction; therefore, motion in any other direction will be ignored. |
| Appearance Marginalization | Only detects motion patterns and is not influenced by the appearances. Enabled by default. |
| Bg Frames | Specify background frames. Available when Abnormal Behavior is selected. |
| Fg Frames | Specify foreground frames. Available when Abnormal Behavior is selected. |
| Direction | Shown when Wrong Direction is selected. Read-only. |
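The Wrong direction mode keeps only motion that points in a specified direction. A minimal sketch of such a filter is shown below. The function name `moves_in_direction`, the use of degrees, and the `tolerance_deg` parameter are assumptions for illustration; the article does not state how the engine compares directions.

```python
import math


def moves_in_direction(dx, dy, direction_deg, tolerance_deg=45.0):
    """Return True if motion vector (dx, dy) points within tolerance_deg
    of direction_deg (0 = positive x axis, angles in degrees)."""
    if dx == 0 and dy == 0:
        return False  # no motion, so no direction to compare
    angle = math.degrees(math.atan2(dy, dx))
    # Smallest absolute difference between the two angles, in [0, 180].
    delta = abs((angle - direction_deg + 180.0) % 360.0 - 180.0)
    return delta <= tolerance_deg
```

For example, with `direction_deg=0`, a displacement of `(1, 0)` passes the filter, while `(-1, 0)` and `(0, 1)` are ignored.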

Multi-Layer Hybrid

| Option | Description |
| --- | --- |
| Timing | See the Dual Layer Dynamic Background Model descriptions for details on Time to clear long-term representation (seconds), Time to clear short-term representation (seconds), and Time to move short-term to long-term representation (seconds). |
| Sensitivity | |
| Sensitivity | Controls how sensitive the background model is to perceived changes from the expected background values. Evaluates up to four (4) features (at least one feature should be selected): Brightness (from black to white), Thermal, Color (red, blue, gray, etc.), and Texture (local patterns of brightness). If sensitivity is low, changes are attributed to natural variations in the appearance of the background. If sensitivity is high, changes are attributed to a foreground object. |
| Brightness | Looks for changes in gray-level (from black to white). Enabled by default, but the appropriate sensitivity depends on how much the lighting in the scene varies over time. |
| Thermal | This option gives equal importance to intensity differences across the whole range of possible values. Disabled by default. |
| Color | The engine looks for changes in hue and saturation. Enabled by default. Color is usually not as strongly affected by lighting, but not all objects can be distinguished from the background by color alone. This option requires more CPU time than Brightness. |
| Texture | The engine looks for changes in the local brightness pattern, especially new edges. Disabled by default. Texture is generally less affected by lighting, but flatter objects may not have enough texture to be distinguished from the background. This option requires more CPU time than Color. |
| Multiscale Processing | Select this option to monitor for changes at multiple spatial resolutions. Disabled by default. This option can improve accuracy for difficult scenes (especially in combination with Texture features), but it also increases CPU load. |
| Adaptation | |
| Time to adapt representations to show changes (seconds) | Controls how quickly the background model can adapt to slow changes in the scene (such as the sun going down). Use a large value if you need to detect slow changes in the scene. Use a small value if the scene contains many gradual but relatively quick changes in lighting. |
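The interaction between the adaptation time and the sensitivity can be illustrated with a simple exponentially smoothed background value. This is a sketch under stated assumptions, not the Multi-Layer Hybrid algorithm: the functions `adapt_background`, `is_foreground`, and `lag_after_ramp`, the 0 to 1 sensitivity scale, and the threshold mapping are all hypothetical.

```python
import math


def adapt_background(background, observed, dt_s, time_to_adapt_s):
    """Move the background estimate toward the observed value.

    Exponential smoothing: the larger time_to_adapt_s is, the smaller the
    step taken for each frame interval dt_s.
    """
    alpha = 1.0 - math.exp(-dt_s / time_to_adapt_s)
    return background + alpha * (observed - background)


def is_foreground(background, observed, sensitivity):
    """Hypothetical mapping: sensitivity in [0, 1]; higher lowers the threshold."""
    threshold = 255.0 * (1.0 - sensitivity)
    return abs(observed - background) > threshold


def lag_after_ramp(time_to_adapt_s, seconds=60, rate_per_s=1.0):
    """Gap between scene and background after a slow, steady brightening."""
    background = observed = 100.0
    for _ in range(seconds):
        observed += rate_per_s
        background = adapt_background(background, observed, 1.0, time_to_adapt_s)
    return observed - background
```

In this model, a short adaptation time lets the background follow a gradual lighting change closely, so the change is absorbed. A long adaptation time leaves a large gap between scene and background, so the same slow change remains detectable if the sensitivity is high enough.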

Advanced subtab

The Indoor People Tracking task for the VE180 includes additional advanced configuration options that can be adjusted if needed via Server Configuration > Devices > Analytics Configuration > Advanced subtab.

IMPORTANT: In most cases, the default values for these advanced settings should be sufficient. These settings are for experts only, and the values should be changed only in cases where the analytic engine is not functioning as expected.

| Option | Description |
| --- | --- |
| Processing Delay | The number of frames the tracker needs to run to create a buffer before tracking the live images. Set to 0 by default. |
| Dwell Time | |
| Show after (seconds) | Displays the number of seconds that an object has been dwelling, once it has been dwelling for at least the specified number of seconds. Disabled by default. |
| Motion limit (% of size) | Defines how much an object can move and still be considered to be dwelling. Horizontal and Vertical are calculated as a percent of object size that the object can move. Examples: In an Uncalibrated Camera Position (set via the Overview subtab), Horizontal 50 means an object can move horizontally by up to 50% of its maximum height and width before it is considered to have moved. Vertical works the same way. For a calibrated Camera Position (Angled, Overhead), Horizontal sets the percentage of the object's height that the object can move on the ground plane. Vertical is ignored. |
| Tracking | |
| Total number of proposals per iteration | Defines how much analysis the algorithm is allowed, measured in frames. Indoor only. |
| Max proposals per object | Defines how much analysis the algorithm is allowed, measured in objects (if there are few objects). Indoor only. |
| System Temperature | Defines how willing the system is to think about moves that do not immediately improve the scene model’s correspondence with the available evidence. Indoor only. |
| Object Appearance | |
| Color blocks | Stores the average color value at particular locations on the object. Enabled by default. |
| Color histogram | Stores the rough distribution of pixel color across the object. Disabled by default. |
| Sizes and Distances | |
| New Object Min Size (pixels) | Defines the smallest object size to detect and track. |
| New Object Min Travel Distance (meters) | Defines how far the object needs to travel before the user sees it appear on the screen within the camera view. For example, a stationary tree may sway in a breeze, but its movement would not register the tree as an object to track so long as it is moving a distance smaller than the New Object Min Travel Distance value. |
| New Object Min Travel Distance (pixels) | |
| Hidden Object Max Jump Distance (meters) | Defines the maximum distance an object that just appeared in the scene can move between two consecutive frames, where the object has not been reported as a tracked object. |
| Hidden Object Max Duration (seconds) | Defines how long to keep a previously tracked object hidden in the scene. Keeping the object hidden can allow for re-tracking that same object when the object becomes occluded for a short period of time. |
| General Proposers | |
| Add an object | Tries to add new objects to the scene. If this is not enabled, no objects will ever be tracked. |
| Remove an object | Removes existing objects. If this is not enabled, existing objects can’t be removed. |
| Swap two objects' positions | Swaps positions of two nearby objects. Disabled by default. |
| Swap two objects' depths | Swaps the distance of two nearby objects from the camera only. Disabled by default. |
| Adapt an object | Changes the object’s position to better fit the data. Disabled by default. |
| Tracking Proposers | |
| Color Blocks | Finds a new position based on color block information. Enabled by default. |
| Color Histogram | Finds a new position based on color histogram information. Disabled by default. |
| Contours | Finds a new position based on the object’s outline. Disabled by default. |
| Sparse Features | Finds a new position based on local texture points. Disabled by default. |
| Foreground | Finds a new position that aligns the object with detected foreground. Enabled by default. |
| Motion Dynamics | Finds a new position, randomly, based on how it moved in previous frames. Enabled by default. |
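The Dwell Time options can be illustrated for the uncalibrated case with a short Python sketch. The function names `is_dwelling` and `dwell_label` are hypothetical, and the sketch assumes that the Horizontal limit is a percentage of the object's width and the Vertical limit a percentage of its height; the article only states that both are a percent of object size.

```python
def is_dwelling(start_xy, current_xy, object_size, horizontal_pct, vertical_pct):
    """Return True if the object stayed within the motion limit.

    object_size is (width, height) in pixels. Assumption: the horizontal
    limit is a percentage of the width, the vertical limit of the height.
    """
    width, height = object_size
    dx = abs(current_xy[0] - start_xy[0])
    dy = abs(current_xy[1] - start_xy[1])
    within_horizontal = dx <= width * horizontal_pct / 100.0
    within_vertical = dy <= height * vertical_pct / 100.0
    return within_horizontal and within_vertical


def dwell_label(dwell_s, show_after_s):
    """Return the dwell time to display, or None before the Show after threshold."""
    return dwell_s if dwell_s >= show_after_s else None
```

For a 40 x 120 pixel object with both limits at 50, a 15 pixel sideways shift still counts as dwelling, while a 30 pixel shift does not.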
